Always A/B test your landing pages

A/B testing removes guesswork and shows you what actually improves conversions. Learn what to test, how to run reliable experiments and why 95 percent significance matters.

This is tip #8 in an 8-part Ultimate Guide to Conversion Optimization.

Even well-designed landing pages are based on assumptions. You might think a headline is clear, or that a CTA is strong, or that a layout “should” work. But the reality is simple:

You don’t know what works until you test it.

A/B testing removes guesswork and replaces it with evidence. It reveals what actually influences conversions and helps you make confident decisions that improve performance over time.

This ties directly back to Tip #3 (blueprint) and Tip #4 (message match). Once you have the structure and message aligned, testing helps you refine what works best for your audience.

Why A/B testing matters

- Your assumptions might be wrong

- Users don’t behave the way you expect

- Good ideas sometimes fail

- Small improvements multiply over time

- Testing protects you from making expensive mistakes

Testing gives you clarity instead of guesswork.

What you can test

These categories create the biggest impact:

1. Messaging

- Headlines

- Subheadlines

- Value propositions

- CTA text

2. Visual elements

- Hero images

- CTA styling

- Contrast and encapsulation

- Directional cues

3. Page experience

- Layout structure

- Form length

- Navigation removal

- Spacing and hierarchy

4. Offer and incentive

- Free trial vs free demo

- Different lengths of trial

- Lead magnet vs audit

Each test should focus on one meaningful change, not a full redesign.

The importance of statistical significance

Not every uplift is real. Some results happen by chance.

That’s why you need statistical significance — a way to validate whether your variant truly outperformed your control.

The general rule:

👉 Wait until you reach 95 percent significance or higher before declaring a winner.

Why 95 percent?

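Because at that level, a difference as large as the one you observed would show up by chance less than 5 percent of the time if your change had no real effect. That is a strong signal that the improvement is driven by your change and not by random variation.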

No formulas needed.

Just wait for a clear, reliable signal.
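Most testing tools report significance for you, but if you want to check a result yourself, the calculation is short. The sketch below uses a standard two-sided two-proportion z-test, which is one common way to do it and not necessarily the method your tool uses. The function name `ab_significance` and the visitor and conversion counts are made up for illustration.

```python
from math import erf, sqrt

def ab_significance(conv_a, n_a, conv_b, n_b):
    """Two-sided two-proportion z-test.

    Returns (relative uplift of B over A, confidence as 1 - p-value).
    Assumes both groups have visitors and at least one conversion overall.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled conversion rate under the assumption that A and B perform the same
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    # Standard normal CDF via the error function
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    uplift = (rate_b - rate_a) / rate_a
    return uplift, 1 - p_value

# Hypothetical counts: control converts 200 of 5,000, variant 250 of 5,000
uplift, confidence = ab_significance(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
print(f"uplift: {uplift:.1%}, confidence: {confidence:.1%}")
```

With these example counts the variant clears the 95 percent bar. With identical counts in both groups the confidence drops to zero, which is what you would expect when there is no difference to detect.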

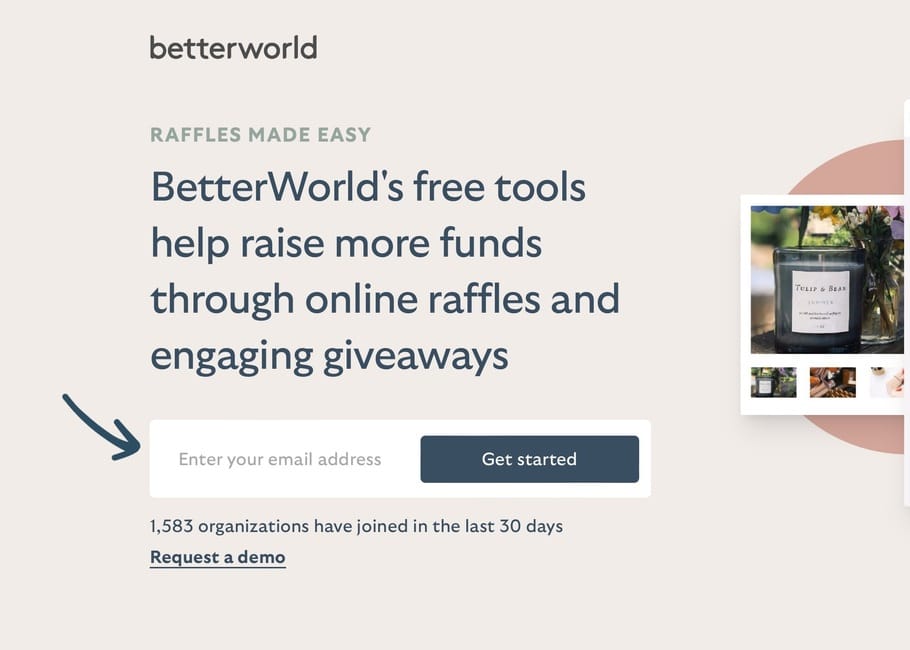

A real example: 18 percent uplift

Without vs with visual guidance.

In one test, a small visual change led to an 18 percent increase in conversions.

The only reason we trusted it, and kept the winning version, was that it reached 95 percent significance.

Small adjustments can create big impact when the test is set up correctly.

How to run a good A/B test

A simple, reliable process:

- Start with a clear hypothesis

  Example: “If we simplify the hero, more users will understand the offer and sign up.”

- Test one meaningful change

  Not multiple changes in the same variant.

- Split traffic fairly

  Usually 50/50.

- Run the test long enough

  Avoid stopping early even if results look promising.

- Reach 95 percent significance or higher

  No winner until then.

- Document your learnings

  Insights become more valuable than individual wins.
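If you split traffic yourself instead of relying on a testing tool, one common approach is to hash a visitor ID so that each visitor always sees the same version and the split comes out close to 50/50. This is a minimal sketch under that assumption. The function name `assign_variant`, the experiment name `hero-test` and the visitor IDs are hypothetical.

```python
import hashlib

def assign_variant(visitor_id, experiment="hero-test", split=0.5):
    """Deterministically bucket a visitor into 'control' or 'variant'."""
    # Including the experiment name keeps buckets independent across tests
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the first 8 hex digits to a number in [0, 1)
    bucket = int(digest[:8], 16) / 0x100000000
    return "control" if bucket < split else "variant"

# Simulate 10,000 hypothetical visitors and count how they are distributed
counts = {"control": 0, "variant": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Because the assignment depends only on the ID, a returning visitor lands in the same group every time, which keeps the comparison between control and variant clean.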

Checklist: is your test valid?

- Only one major change in the variant

- Clear hypothesis

- Fair traffic distribution

- Large enough sample size

- Test ran long enough

- Reached at least 95 percent significance

- Learnings documented
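To get a rough idea of what "enough" means for sample size before you launch, you can estimate the visitors needed per version. The sketch below uses a standard textbook approximation for comparing two conversion rates. The 80 percent power setting, the function name `visitors_per_variant` and the example rates are assumptions for illustration, not figures from this guide.

```python
from math import ceil
from statistics import NormalDist

def visitors_per_variant(baseline_rate, relative_uplift,
                         confidence=0.95, power=0.80):
    """Approximate visitors needed in EACH group to detect the given uplift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_uplift)
    # z-scores for a two-sided test at the chosen confidence, and for power
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Hypothetical page: 4 percent baseline conversion, hoping to detect a 20 percent lift
print(visitors_per_variant(0.04, 0.20))
```

For this example the estimate lands at roughly ten thousand visitors per version. Smaller expected uplifts push that number up quickly, which is one reason low-traffic pages need longer tests.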

Key takeaway

A/B testing is your most reliable way to understand what really drives conversions.

It protects you from assumptions and reveals what your audience actually responds to.

Test confidently, learn continuously and optimise every step of your funnel.

Want all 7 tips in your inbox?

Get The Ultimate Guide to Conversion Optimization – a free 7‑part email series with the most effective CRO tips I’ve learned from 12 years of landing page testing.

Want personal feedback on one of your landing pages?

Send me the URL and I’ll take a look. Quick, actionable and free.

👉 Get your free audit